How Much AI Code Is Actually in Your Codebase?

Levi Garner

Founder & CTO, InteliG

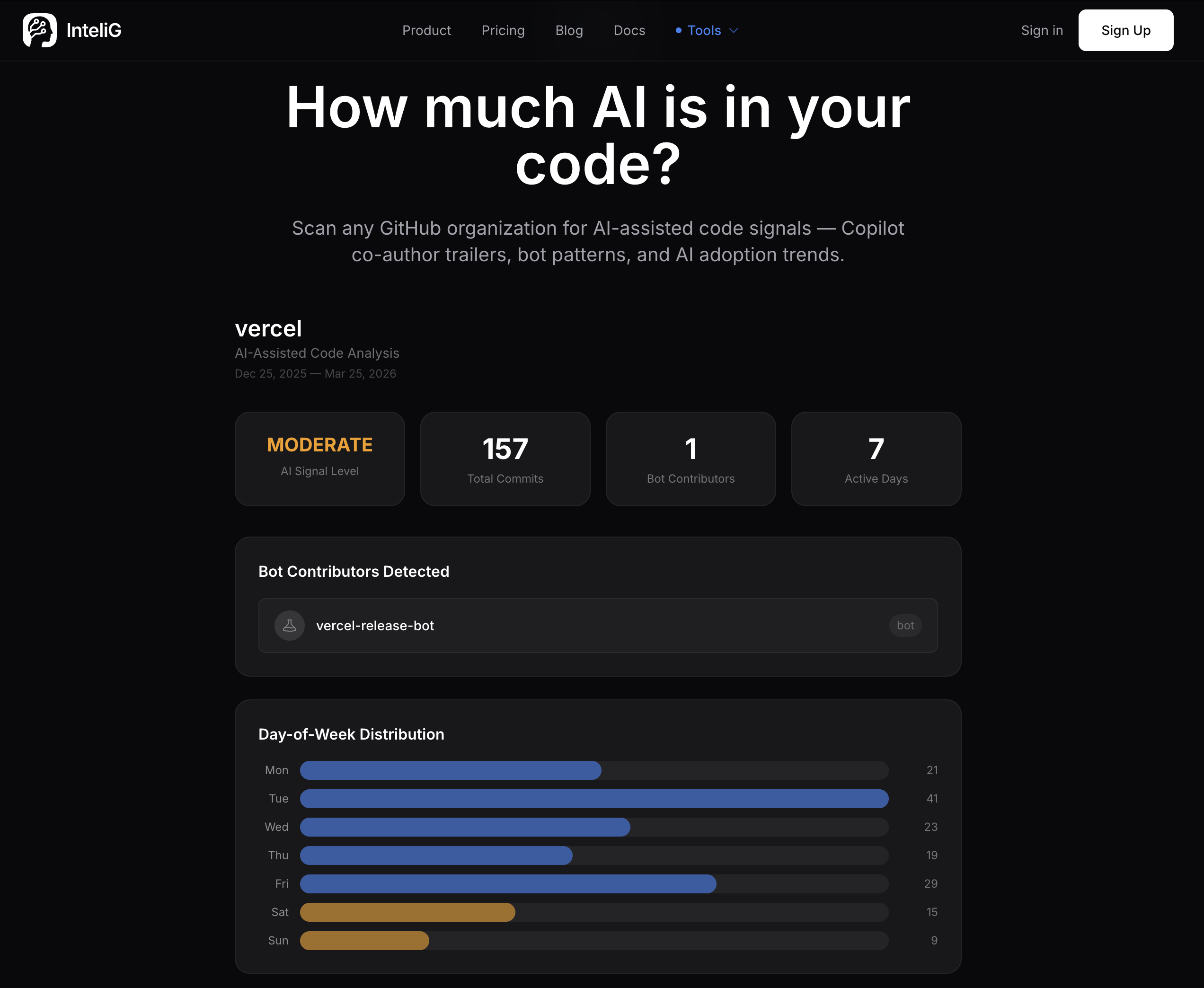

TLDR: We scanned Vercel’s GitHub org and found a MODERATE AI signal level — an estimated 22-31% AI-assisted code, 1 bot contributor detected, and a clear evening coding pattern that suggests Copilot/Cursor usage. Check your number → intelig.ai/tools/ai-attribution

Every CTO I talk to asks the same question: “How much of our code is actually written by AI?”

Nobody can answer it.

They know their team uses Copilot. They’ve seen Cursor and Devin demos. They suspect the number is higher than they think. But they’ve never actually measured it.

The signal is already there

We pointed our AI Code Attribution Scanner at Vercel, one of the most respected engineering organizations in open source. The result: MODERATE AI signal level. An estimated 22-31% of code shows AI-assisted patterns. One bot contributor detected (vercel-release-bot). And a telling temporal pattern: heavy commit concentration between 6 and 10 PM, which suggests developers using Copilot and Cursor during extended evening sessions.

That’s Vercel. A well-run, disciplined engineering org. The number is only going up.

Bot accounts pushing dependency updates, release automation, and boilerplate generation. Copilot co-authorship trailers in commit metadata. Evening coding bursts that correlate with AI-assisted workflows. It adds up fast.
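To make two of these signals concrete, here is a minimal sketch of how bot-style logins and AI co-authorship trailers could be flagged from commit metadata. The login patterns and the list of tool names are assumptions chosen for illustration, not the scanner's actual rules; `Co-authored-by:` is the standard commit trailer GitHub recognizes.

```python
import re

# Assumed heuristics for illustration only, not the scanner's real rule set.
# GitHub App accounts end in "[bot]"; "-bot" catches names like vercel-release-bot.
BOT_LOGIN = re.compile(r"(\[bot\]|-bot)$", re.IGNORECASE)

# Matches trailer lines of the form "Co-authored-by: Name <email>".
CO_AUTHOR = re.compile(
    r"^co-authored-by:\s*(.+?)\s*<([^>]*)>\s*$",
    re.IGNORECASE | re.MULTILINE,
)

# Hypothetical list of substrings that hint at an AI tool as co-author.
AI_HINTS = ("copilot", "cursor", "devin")


def is_bot_login(login: str) -> bool:
    """Return True when the account name looks like an automation account."""
    return bool(BOT_LOGIN.search(login))


def ai_co_authors(message: str) -> list[str]:
    """Return co-author names whose name or email mentions a known AI tool."""
    names = []
    for name, email in CO_AUTHOR.findall(message):
        haystack = f"{name} {email}".lower()
        if any(hint in haystack for hint in AI_HINTS):
            names.append(name)
    return names
```

Substring matching like this will produce false positives and misses, so the output is better read as a signal to investigate than as a measurement.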

Why this matters for CTOs

This isn’t about whether AI code is good or bad. It’s about knowing what you’re shipping.

Audit risk. If a client or regulator asks what percentage of your codebase was AI-generated, can you answer? SOC 2 auditors are starting to ask. Enterprise buyers definitely will.

Quality signal. AI-generated code that gets merged without meaningful review carries a different risk profile than code a senior engineer wrote and tested. You need to know the ratio.

Team velocity distortion. If 60% of your commits are AI-authored, your “engineering velocity” metrics are lying to you. Lines of code, commit counts, PR throughput — all inflated by machines. You’re measuring bot productivity, not human impact.
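The arithmetic behind that distortion is simple enough to sketch. The function below splits a commit count into automated and human portions; `is_automated` is a hypothetical classifier you would supply yourself, and the 60% figure in the usage note is the illustrative number from the paragraph above, not measured data.

```python
def split_velocity(commit_authors, is_automated):
    """Split a list of commit author names into automated vs human counts.

    `is_automated` is a caller-supplied predicate (hypothetical here) that
    decides whether a given author should count as a bot or AI author.
    """
    total = len(commit_authors)
    automated = sum(1 for author in commit_authors if is_automated(author))
    return {
        "total": total,
        "automated": automated,
        "human": total - automated,
        "automated_share": automated / total if total else 0.0,
    }
```

With 10 commits of which 6 come from automated authors, the dashboard reports 10 commits while the human figure is 4, so any trend line built on the raw count overstates human output by the same margin.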

IP implications. The legal landscape around AI-generated code is still evolving. At minimum, you should know your exposure.

What the scanner actually does

Point it at any public GitHub organization. It analyzes commit metadata, author patterns, co-authorship tags, and bot signatures across all repositories.
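For readers who want to see where this kind of metadata comes from, here is a rough sketch that pulls the relevant fields for one public repository from GitHub's REST API (`GET /repos/{owner}/{repo}/commits`). It is an illustration under assumptions, not the scanner's implementation: the record layout and function names are made up for this example, only the first page of results is read, and unauthenticated requests are subject to GitHub's low rate limit.

```python
import json
from urllib.request import Request, urlopen

API = "https://api.github.com"


def http_get_json(url):
    """Fetch a URL and decode the JSON body (unauthenticated request)."""
    req = Request(url, headers={"Accept": "application/vnd.github+json"})
    with urlopen(req, timeout=30) as resp:
        return json.load(resp)


def commit_records(owner, repo, get_json=http_get_json, per_page=100):
    """Return simplified commit records for the first page of a repo's commits.

    `get_json` is injectable so the parsing can be exercised without network
    access. The output dictionary layout is an assumption for this sketch.
    """
    url = f"{API}/repos/{owner}/{repo}/commits?per_page={per_page}"
    records = []
    for item in get_json(url):
        # "author" is the linked GitHub account; it is null when the commit
        # email does not map to any account.
        account = item.get("author") or {}
        git_author = item["commit"]["author"]
        records.append({
            "sha": item["sha"],
            "login": account.get("login"),
            "account_type": account.get("type"),  # e.g. "User" or "Bot"
            "date": git_author["date"],
            "message": item["commit"]["message"],
        })
    return records
```

Covering a whole organization would mean listing its repositories first and following pagination for each one, which this sketch leaves out.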

In about 60 seconds, you get:

- AI signal level — LOW, MODERATE, or HIGH across the org

- Bot contributors detected — with specific names and commit counts

- Day-of-week distribution — weekday vs weekend coding patterns (blue vs amber bars)

- Hour-of-day heatmap — commit activity by hour, with peak window identification

- AI narrative — Cognis analyzes the patterns and estimates the real AI-assisted percentage
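The two temporal outputs in this list can be approximated with a few lines of code. The sketch below assumes commit timestamps are ISO 8601 strings that carry the author's own UTC offset, so the hour reflects the author's local time; the 4-hour window width and the function names are assumptions for illustration, and the scanner's own method may differ.

```python
from collections import Counter
from datetime import datetime


def hour_histogram(timestamps):
    """Count commits per hour of day (0-23), using each timestamp's own offset."""
    counts = Counter(datetime.fromisoformat(ts).hour for ts in timestamps)
    return [counts.get(hour, 0) for hour in range(24)]


def peak_window(hist, width=4):
    """Return (start_hour, share) for the busiest `width`-hour window.

    The window wraps around midnight. `share` is the fraction of all commits
    that fall inside the window.
    """
    def window_total(start):
        return sum(hist[(start + i) % 24] for i in range(width))

    best_start = max(range(24), key=window_total)
    total = sum(hist)
    share = window_total(best_start) / total if total else 0.0
    return best_start, share


def weekend_share(timestamps):
    """Return the fraction of commits made on Saturday or Sunday."""
    days = [datetime.fromisoformat(ts).weekday() for ts in timestamps]
    return sum(day >= 5 for day in days) / len(days) if days else 0.0
```

Timestamps normalized to UTC would shift every author's hours by their offset, so a peak window computed from them says little about evening work unless the offsets are restored first.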

No authentication required for public orgs. No sign-up. Just paste the URL and get data.

The conversation you need to have

The question isn’t whether your team should use AI coding tools. They already are.

The question is whether you know how much, where, and what the implications are.

Most engineering leaders are flying blind on this. They approved Copilot licenses, maybe experimented with Devin or Cursor, and assumed everything was fine. Meanwhile, the composition of their codebase shifted dramatically and nobody noticed.

This is a board-level question now. “What percentage of our code is AI-generated?” If you can’t answer it, you have a visibility gap.

Try it yourself

The scanner works on any public GitHub organization. Pick your favorite open-source company and see what the number actually is.

But your own org’s number — that’s the one that matters.
